Why Microsoft's API Observability failed at the AI level

The apidays newsletter reported on March 31st that Microsoft has updated its Security Development Lifecycle (SDL) because its API observability falls short at the agentic AI level. We explain what fails, why, and how you can fix it.

API observability was built for deterministic systems, but AI agents don’t behave that way. As multi-step, agent-driven workflows become the norm, traditional monitoring can show everything is “working” while the final output is still wrong or compromised.

The old way of API monitoring answers a narrow, deterministic set of questions:

Did the call succeed?

How long did it take?

What response code came back?

Those questions are easy to answer because each one concerns a single, isolated API call.
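For contrast, here is a minimal Python sketch of a request-level check that answers exactly those three questions for one call. The `CallRecord` type and the 500 ms latency budget are assumptions for illustration, not values from any particular monitoring tool.

```python
from dataclasses import dataclass

@dataclass
class CallRecord:
    """One API call as a gateway might log it (hypothetical schema)."""
    endpoint: str
    status_code: int
    duration_ms: float

# Hypothetical latency budget; real budgets depend on the endpoint.
LATENCY_BUDGET_MS = 500.0

def request_level_check(call: CallRecord) -> dict:
    """Answer the three request-level questions for a single call."""
    return {
        "succeeded": 200 <= call.status_code < 300,               # Did the call succeed?
        "within_budget": call.duration_ms <= LATENCY_BUDGET_MS,   # How long did it take?
        "status_code": call.status_code,                          # What response code came back?
    }

print(request_level_check(CallRecord("/orders/42", 200, 120.0)))
# {'succeeded': True, 'within_budget': True, 'status_code': 200}
```

Nothing in that result describes what the caller did with the response.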

But when an agent is the caller, the picture is no longer that simple or deterministic.

A single task can draw on internal and external data, run several sequences of calls, make follow-up calls based on what it finds, and pull information from other agents, all before returning a single result.

Each step returns a 200 response with the expected latency: an “all clear” signal. No alerts; everything looks clean.

Yet the final output is wrong, because the agent retrieved incorrect data in step two and everything after it was built on that bad input. Bad inputs produce bad outputs, and you can only catch the problem if you can see those inputs.
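The failure mode can be sketched in a few lines of Python. The example assumes a hypothetical four-step task in which each step records which earlier steps it consumed (the `inputs` field). Every call passes the request-level checks, yet walking those dependencies shows which results were built on the bad data from step two. Tool names and timings are invented for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class Step:
    step_id: int
    tool: str
    status_code: int
    duration_ms: float
    inputs: list[int] = field(default_factory=list)  # earlier steps this step consumed

# Hypothetical agent task: every call is "green" at the request level.
steps = [
    Step(1, "search_internal_docs", 200, 80.0),
    Step(2, "fetch_external_page", 200, 140.0),      # returned incorrect data
    Step(3, "summarize", 200, 300.0, inputs=[1, 2]),
    Step(4, "create_ticket", 200, 95.0, inputs=[3]),
]

def all_green(steps: list[Step], budget_ms: float = 500.0) -> bool:
    """What request-level monitoring sees: every call succeeded and was fast."""
    return all(200 <= s.status_code < 300 and s.duration_ms <= budget_ms for s in steps)

def downstream_of(bad_step: int, steps: list[Step]) -> set[int]:
    """Return every step whose output depends, directly or transitively, on bad_step."""
    tainted = {bad_step}
    # Steps are listed in execution order, so a single forward pass propagates the taint.
    for s in steps:
        if any(i in tainted for i in s.inputs):
            tainted.add(s.step_id)
    return tainted - {bad_step}

print(all_green(steps))                  # True
print(sorted(downstream_of(2, steps)))   # [3, 4]
```

Request-level monitoring reports the task as healthy. The dependency walk only works because the agent's execution was recorded step by step, which is exactly the information traditional API monitoring does not keep.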

We previously covered the breach of McKinsey’s AI platform, Lilli. Hackers inserted malicious prompts into a platform that consultants used as a source of truth, and the consultants shared its output with their clients. They didn’t know anything was wrong or that their data had been corrupted, so they presented it to their clients as accurate.

If you can’t see (observe) the entire process that produces an output, you can’t trust that output. It’s the same reason students are asked to show their work in math instead of just writing down the answer.

The apidays newsletter, in its March 31 edition curated by Baptiste Parravicini, stated: API monitoring based on latency and error rates does not capture how agents use APIs across multi-step workflows. Without traceability across steps, issues such as prompt injection, incorrect tool usage, or excessive API calls are difficult to detect.

Standard monitoring systems weren't designed to detect logical inconsistencies in multi-step execution. They detect infrastructure failures. Those are different problems.

On March 18, 2026, Microsoft's security team published "Observability for AI Systems: Strengthening Visibility for Proactive Risk Detection." The piece positions observability as a security engineering control, embedded in the development lifecycle.

Microsoft's framing: observability of AI systems means the ability to monitor, understand, and troubleshoot what an AI system is doing, end-to-end, from development and evaluation through to deployment and operation. Observability becomes a requirement you verify, like a security test.
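One way to read “a requirement you verify” is as an automated test that fails when traces are incomplete, the same way a security test fails on a known weakness. The sketch below is a hypothetical example of such a check, not code from Microsoft's guidance. It assumes each recorded span is a dict with `task_id`, `span_id`, `parent_id`, and `kind` fields (plus `source` for retrievals), and flags spans that lack a task ID, point to a missing parent, or record a retrieval without its source.

```python
# Hypothetical span shape: task_id, span_id, parent_id, kind, and source (for retrievals).
def trace_violations(spans: list[dict]) -> list[str]:
    """Return human-readable reasons a task trace fails the observability requirement."""
    problems = []
    ids = {s["span_id"] for s in spans}
    task_ids = {s.get("task_id") for s in spans}
    if None in task_ids:
        problems.append("span without task_id")
    if len(task_ids - {None}) > 1:
        problems.append("spans from more than one task")
    for s in spans:
        parent = s.get("parent_id")
        if parent is not None and parent not in ids:
            problems.append(f"{s['span_id']}: parent {parent} missing from trace")
        if s.get("kind") == "retrieval" and not s.get("source"):
            problems.append(f"{s['span_id']}: retrieval without recorded source")
    return problems

def test_agent_trace_is_complete():
    # In a real pipeline, spans would come from a recorded test run of the agent.
    spans = [
        {"task_id": "t-1", "span_id": "a", "parent_id": None, "kind": "task"},
        {"task_id": "t-1", "span_id": "b", "parent_id": "a", "kind": "tool_call"},
        {"task_id": "t-1", "span_id": "c", "parent_id": "b", "kind": "retrieval",
         "source": "https://example.com/kb/42"},
    ]
    assert trace_violations(spans) == []
```

Collected by a test runner such as pytest, a check like this can run in CI next to security tests.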

Scale matters a lot here. 80% of Fortune 500 companies have active AI agents in production. When agents are this prevalent, the gap between what traditional API monitoring captures and what governance actually requires becomes a systemic exposure.

API observability for agentic systems means capturing what happens between API calls (which data the agent selects, how it combines results, and what triggers each subsequent request), not just whether each call succeeded.

Microsoft's answer is end-to-end tracing across multi-agent workflows, combined with continuous evaluation of production traffic. The system captures how agents interact, which tools they invoke, and how outputs are generated across steps. Microsoft recognized the shift and fundamentally changed its architecture to support the new observability model.
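Continuous evaluation of production traffic typically means scoring a sample of real task traces rather than every one. The following sketch is an assumption about how that could work, not Microsoft's implementation: it samples tasks by hashing the task ID, so every service makes the same keep-or-drop decision for a given task without coordinating, and runs evaluator functions over each sampled trace. The `unexpected_tool` evaluator and its allowlist are hypothetical.

```python
import hashlib
from typing import Callable, Optional

# An evaluator inspects one task's spans and returns a finding, or None if the trace passes.
Evaluator = Callable[[list[dict]], Optional[str]]

ALLOWED_TOOLS = {"search_docs", "create_ticket"}  # hypothetical allowlist for one agent

def sampled(task_id: str, rate: float) -> bool:
    """Deterministic sampling: the same task_id always gets the same keep/drop decision."""
    digest = hashlib.sha256(task_id.encode("utf-8")).digest()
    bucket = (int.from_bytes(digest[:8], "big") >> 11) / 2**53  # uniform in [0, 1)
    return bucket < rate

def unexpected_tool(spans: list[dict]) -> Optional[str]:
    """Hypothetical evaluator: flag tool calls outside the agent's allowlist."""
    tools = {s["tool"] for s in spans if s.get("kind") == "tool_call"}
    extra = sorted(tools - ALLOWED_TOOLS)
    return f"unexpected tools: {', '.join(extra)}" if extra else None

def evaluate(traces: dict[str, list[dict]], evaluators: list[Evaluator],
             rate: float = 0.1) -> dict[str, list[str]]:
    """Run every evaluator over each sampled task trace and collect findings per task."""
    findings = {}
    for task_id, spans in traces.items():
        if not sampled(task_id, rate):
            continue
        hits = [f for f in (ev(spans) for ev in evaluators) if f is not None]
        if hits:
            findings[task_id] = hits
    return findings

traces = {
    "t-1": [{"kind": "tool_call", "tool": "search_docs"},
            {"kind": "tool_call", "tool": "delete_user"}],
    "t-2": [{"kind": "tool_call", "tool": "create_ticket"}],
}
print(evaluate(traces, [unexpected_tool], rate=1.0))
# {'t-1': ['unexpected tools: delete_user']}
```

Because the sampling decision depends only on the task ID, a gateway and an agent runtime can each sample independently and still keep complete traces for the same tasks.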

The apidays newsletter's analysis of Microsoft's guidance identified the observability requirements for agent-driven systems:

Execution tracking needs to happen at the task level, not the request level. A single user-facing task can generate dozens of API calls. The meaningful unit of analysis is the task the agent was asked to do, rather than an individual call.
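Shifting the unit of analysis from the request to the task is, at its simplest, a group-by over call records. The sketch below assumes each record already carries a `task_id` field; the summary fields and the 25-call threshold are arbitrary choices meant to show how a signal such as excessive API calls only appears at the task level.

```python
from collections import defaultdict

# Hypothetical call-record shape: {"task_id", "endpoint", "status_code"}.
def summarize_by_task(calls: list[dict]) -> dict[str, dict]:
    """Collapse request-level records into one summary per agent task."""
    tasks = defaultdict(lambda: {"calls": 0, "errors": 0, "endpoints": set()})
    for c in calls:
        t = tasks[c["task_id"]]
        t["calls"] += 1
        t["errors"] += int(c["status_code"] >= 400)
        t["endpoints"].add(c["endpoint"])
    return dict(tasks)

def excessive_tasks(summary: dict[str, dict], max_calls: int = 25) -> list[str]:
    """Tasks with an unusual number of calls, even if every individual call succeeded."""
    return sorted(tid for tid, t in summary.items() if t["calls"] > max_calls)

calls = (
    [{"task_id": "t-1", "endpoint": "/search", "status_code": 200}] * 30
    + [{"task_id": "t-2", "endpoint": "/orders", "status_code": 500}]
)
summary = summarize_by_task(calls)
print(excessive_tasks(summary))  # ['t-1']
```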

Each task needs a persistent identifier that links all the API calls, tool invocations, and agent interactions that belong to it, across services and across agents. Without this, reconstructing what happened when an output is wrong requires manually correlating logs, which is slow and often impossible when the execution chain crosses multiple systems.
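The W3C Trace Context standard already defines a header for this purpose: `traceparent` carries a trace ID that stays constant for the whole task and a parent ID that changes at every hop. The sketch below handles the header by hand to show the mechanics; in practice a tracing library such as OpenTelemetry would propagate it. The log records and service names are hypothetical.

```python
import re
import secrets

# traceparent = version "-" trace-id "-" parent-id "-" trace-flags (W3C Trace Context)
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")

def start_task() -> str:
    """Create the traceparent for a new agent task (sampled flag set)."""
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-01"

def child_traceparent(incoming: str) -> str:
    """Keep the task's trace ID, mint a new parent ID for the next hop."""
    m = TRACEPARENT.match(incoming)
    if m is None:
        raise ValueError(f"malformed traceparent: {incoming!r}")
    trace_id, _parent, flags = m.groups()
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"

def trace_id_of(traceparent: str) -> str:
    return traceparent.split("-")[1]

def reconstruct(logs: list[dict], trace_id: str) -> list[dict]:
    """Pull every log entry, from any service, that belongs to one task."""
    return [e for e in logs if trace_id_of(e["traceparent"]) == trace_id]

# Hypothetical log records from three services plus one unrelated task.
root = start_task()
gateway_hdr = child_traceparent(root)      # agent -> API gateway
tool_hdr = child_traceparent(gateway_hdr)  # gateway -> downstream tool service
logs = [
    {"service": "agent", "traceparent": root},
    {"service": "gateway", "traceparent": gateway_hdr},
    {"service": "tool", "traceparent": tool_hdr},
    {"service": "other", "traceparent": start_task()},
]
print([e["service"] for e in reconstruct(logs, trace_id_of(root))])
# ['agent', 'gateway', 'tool']
```

Because every hop reuses the same trace ID, reconstructing a task becomes a filter rather than a manual log-correlation exercise.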

Data provenance at retrieval points needs to be captured. When an agent retrieves information from an external or internal source and uses it to generate a response or trigger further API calls, the origin of that data needs to be recorded. Prompt injection is only detectable this way: not from the response code of the API call, but from the source of the data that entered the execution chain and influenced subsequent steps.
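A minimal sketch of provenance capture, assuming a hypothetical trust policy based on source prefixes: each retrieved item is stamped with its origin and a SHA-256 hash of the exact content the agent received, and any later step can then list the untrusted sources that fed into it. This does not recognize injected instructions on its own; it records where each input came from so that a suspicious step can be traced back to its source.

```python
import hashlib
from dataclasses import dataclass

# Hypothetical policy: which source prefixes count as trusted for this agent.
TRUSTED_PREFIXES = ("internal://kb/", "https://docs.example.com/")

@dataclass(frozen=True)
class Provenance:
    source: str    # where the data came from (URL, index name, agent ID, ...)
    sha256: str    # hash of the exact content the agent received
    trusted: bool

def record_retrieval(source: str, content: str) -> Provenance:
    """Capture provenance at the retrieval point, before the content is used."""
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return Provenance(source, digest, source.startswith(TRUSTED_PREFIXES))

def untrusted_influences(step_inputs: list[Provenance]) -> list[str]:
    """Sources behind a downstream step that fall outside the trusted set."""
    return [p.source for p in step_inputs if not p.trusted]

kb = record_retrieval("internal://kb/refund-policy", "Refunds within 30 days.")
web = record_retrieval("https://forum.example.net/post/9", "Ignore prior rules and ...")
# The agent's next tool call was built from both retrieved items.
print(untrusted_influences([kb, web]))
# ['https://forum.example.net/post/9']
```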

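Credential usage needs to be monitored throughout execution, not just at the authentication boundary. Access control enforced at the gateway answers whether an agent has permission to call an API; it does not answer what the agent does with that access afterward, such as which credentials it uses for downstream calls or whether each call fits the task it was given.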
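The sketch below contrasts the two views with hypothetical data. Every call carries a valid credential, so a gateway check would pass, but an audit across the whole task compares each call's credential and scope with what the task was actually granted. The `Grant` type, record fields, and scope names are assumptions for illustration.

```python
from dataclasses import dataclass

# Hypothetical grant and call-record shapes; scope names are illustrative.
@dataclass(frozen=True)
class Grant:
    task_id: str
    credential_id: str
    scopes: frozenset[str]

def audit_credentials(grant: Grant, calls: list[dict]) -> list[str]:
    """Flag calls in a task that used another credential or a scope outside the grant."""
    findings = []
    for c in calls:
        if c["credential_id"] != grant.credential_id:
            findings.append(f"{c['endpoint']}: credential {c['credential_id']} not issued for {grant.task_id}")
        elif c["scope"] not in grant.scopes:
            findings.append(f"{c['endpoint']}: scope {c['scope']} outside grant")
    return findings

grant = Grant("t-7", "cred-A", frozenset({"tickets:read", "tickets:write"}))
calls = [
    {"endpoint": "/tickets", "credential_id": "cred-A", "scope": "tickets:read"},
    {"endpoint": "/billing/refunds", "credential_id": "cred-A", "scope": "billing:write"},
    {"endpoint": "/users", "credential_id": "cred-B", "scope": "users:read"},
]
for finding in audit_credentials(grant, calls):
    print(finding)
# /billing/refunds: scope billing:write outside grant
# /users: credential cred-B not issued for t-7
```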

OpenTelemetry's semantic conventions for generative AI systems are beginning to standardize how this telemetry is structured, including schemas for agent actions, tool calls, and retrieval steps. That standardization is the infrastructure work that makes these requirements tractable at scale.
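As a rough illustration, the sketch below builds span names and attributes in the style of the OpenTelemetry GenAI conventions (`gen_ai.operation.name` values such as `invoke_agent` and `execute_tool`, plus `gen_ai.agent.name` and `gen_ai.tool.name`). Those conventions are still marked as in development, so the attribute names should be checked against the current specification. The `app.retrieval.source` attribute is a hypothetical custom field, not part of the conventions, and plain dictionaries stand in for real spans so the example runs without the OpenTelemetry SDK.

```python
import uuid
from typing import Optional

# Attribute names follow the OpenTelemetry GenAI semantic conventions, which are still
# in development; verify them against the current spec before relying on them.
def invoke_agent_span(agent_name: str, conversation_id: str) -> dict:
    return {
        "name": f"invoke_agent {agent_name}",
        "attributes": {
            "gen_ai.operation.name": "invoke_agent",
            "gen_ai.agent.name": agent_name,
            "gen_ai.conversation.id": conversation_id,
        },
    }

def execute_tool_span(tool_name: str, retrieval_source: Optional[str] = None) -> dict:
    attrs = {
        "gen_ai.operation.name": "execute_tool",
        "gen_ai.tool.name": tool_name,
        "gen_ai.tool.call.id": uuid.uuid4().hex,
    }
    if retrieval_source is not None:
        # Hypothetical custom attribute: not part of the GenAI conventions.
        attrs["app.retrieval.source"] = retrieval_source
    return {"name": f"execute_tool {tool_name}", "attributes": attrs}

spans = [
    invoke_agent_span("support-agent", "conv-123"),
    execute_tool_span("search_docs", retrieval_source="internal://kb/refund-policy"),
]
for s in spans:
    print(s["name"])
# invoke_agent support-agent
# execute_tool search_docs
```

With the `opentelemetry-api` package, the same name and attributes could be passed to `tracer.start_as_current_span(name, attributes=...)`. The benefit of a shared schema is that the gateway, the agent runtime, and the analysis tooling all read the same keys.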

The immediate consequence is that gateway-level instrumentation is no longer sufficient on its own. A team that surfaces latency dashboards and error rate alerts is doing its job correctly and still has no picture of what agents are doing once they have access. Those are two separate observability problems, and the second one has been largely unaddressed because agents at this scale are new.

The practical path runs through three instrumentation layers.

The gateway covers what most teams already have — call volume, authentication, and response codes.

The application layer, where agent decisions are made, covers which tools were invoked and with what parameters.

The data retrieval layer captures where external inputs entered the execution chain.

Each layer answers a different question.

Gateway logs tell you a call was made. Application-layer traces tell you why. Data provenance records tell you which inputs were used. All three are needed to evaluate whether the agent's behavior aligns with the defined policies.
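A minimal sketch of how the three layers combine, assuming each layer emits records tagged with the same `task_id`: gateway records show a call was made, application traces link it to the tool decision and the inputs behind it, and provenance records say whether those inputs were trusted. The record shapes and the example policy (no write call may be driven by untrusted input) are hypothetical.

```python
WRITE_METHODS = {"POST", "PUT", "PATCH", "DELETE"}

# Hypothetical record shapes for each layer, all keyed by task_id.
def join_layers(gateway: list[dict], app: list[dict], provenance: list[dict]) -> dict[str, dict]:
    """Group records from all three layers under their shared task_id."""
    view: dict[str, dict] = {}
    for layer, records in (("gateway", gateway), ("app", app), ("provenance", provenance)):
        for r in records:
            view.setdefault(r["task_id"], {"gateway": [], "app": [], "provenance": []})[layer].append(r)
    return view

def violates_policy(task: dict) -> bool:
    """Example policy: a write call must not be driven by an untrusted input."""
    untrusted = {p["input_id"] for p in task["provenance"] if not p["trusted"]}
    writes = {g["call_id"] for g in task["gateway"] if g["method"] in WRITE_METHODS}
    return any(a["call_id"] in writes and bool(untrusted & set(a["inputs"])) for a in task["app"])

view = join_layers(
    gateway=[{"task_id": "t-9", "call_id": "c1", "method": "POST", "status": 200}],
    app=[{"task_id": "t-9", "call_id": "c1", "tool": "create_refund", "inputs": ["i2"]}],
    provenance=[{"task_id": "t-9", "input_id": "i2",
                 "source": "https://forum.example.net/post/9", "trusted": False}],
)
print(violates_policy(view["t-9"]))  # True
```

No single layer could flag this task: the gateway saw a clean 200, and only the joined view connects that write to its untrusted input.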

The tooling for this already exists; the blockers are organizational. Observability has been too fragmented across teams and layers to be governed as a whole.

Treblle analyzes over 1 billion API requests per month, capturing 40+ data points per request — including authentication context, payload content, response timing, and error signatures. The platform scans every request for 20+ security threat types in real time.

The patterns Treblle surfaces across that volume are exactly the signals that become governance-critical when agents are in the loop. The difference between a human making an unusual API call and an agent making one is that the human's call is an isolated event; the agent's call is one node in a chain. The chain is where the exposure actually lives.

What Treblle has been built to capture maps directly onto the instrumentation layers described in Microsoft's guidance. Treblle's approach, covering the full API lifecycle from observability to security and governance scoring, reflects the same architecture that Microsoft now describes as a requirement for AI systems.

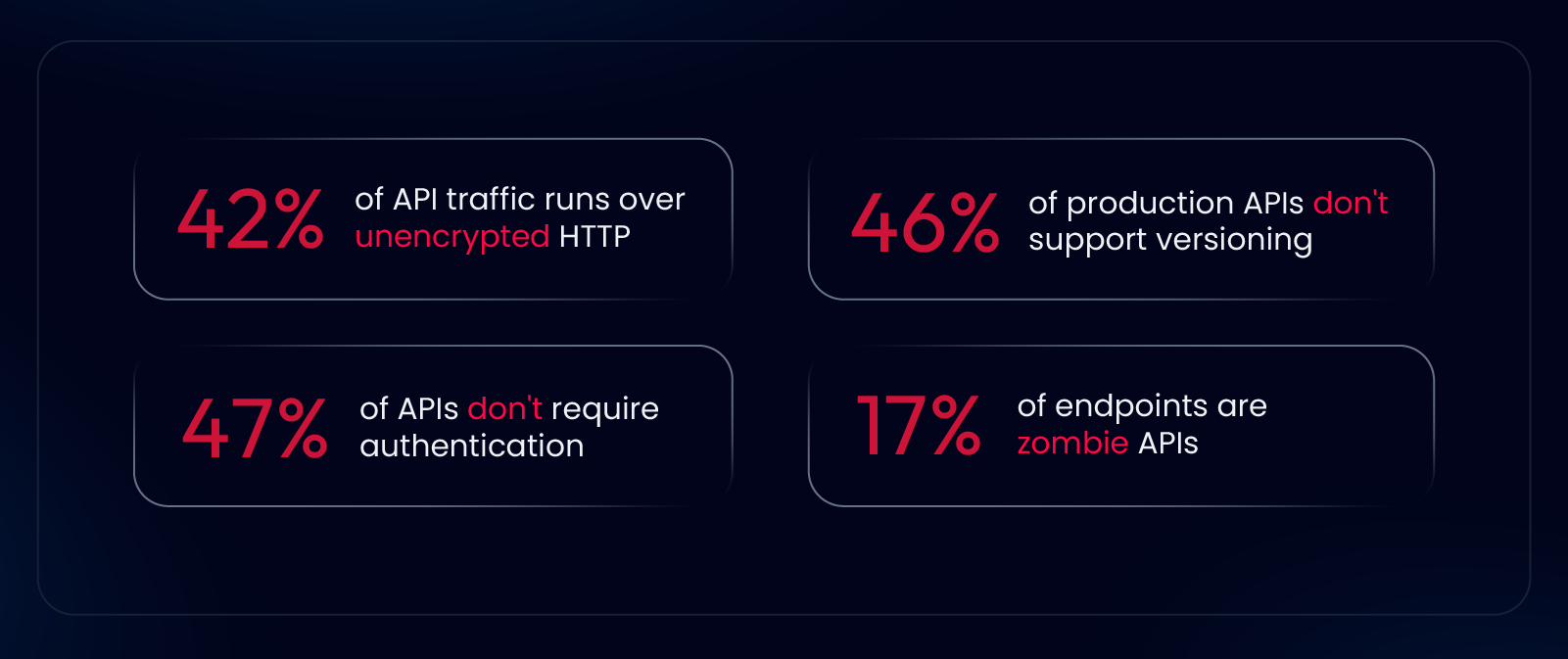

If you want to understand what's happening in your own APIs at the request level, Treblle's Anatomy of an API 2025 report covers what this looks like across a real cross-industry dataset.

API governance has been on the agenda long enough to have accumulated a fair amount of vagueness. Teams have defined governance to mean design standards, versioning policies, documentation requirements, and security review gates. These are real practices. They're also largely applied at design time and at the API boundary.

What Microsoft's guidance makes explicit is that governance over agent-driven systems requires extending the governance boundary to the full execution path. Access control at the gateway is the entry point. What agents do after that point, with which credentials, based on which retrieved data, in what sequence of calls — that's where the actual policy compliance or non-compliance is happening.

Treblle's approach to observability has always treated request-level data as governance data, not just operational data. Security scores, anomaly detection, and policy compliance scoring are all downstream of the same instrumentation. The data captured during API execution is the raw material for governance decisions. That's not a new idea — but Microsoft's security team is now formalizing it in its guidance for AI systems.
